Would love to see DEVONthink add support for the newly released open models by OpenAI so that AI could be used for free within the app. Thanks in advance for the consideration, as I know that you all have a lot on your plate (including the To Go update to 4.0).

These models can be used via Ollama or LM Studio already.
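For anyone who wants to check the local server outside of DEVONthink first, here is a minimal sketch of a request against the OpenAI-compatible endpoint that both apps expose. The ports are the apps' defaults as far as I know (an assumption if you changed them), the helper names are made up for illustration, and the model identifier depends on what you have pulled or loaded.

```python
import json
import urllib.request

# Assumption: both apps are running on their default local ports.
BASE_URLS = {
    "ollama": "http://localhost:11434/v1",
    "lmstudio": "http://localhost:1234/v1",
}

def build_chat_request(server, model, prompt):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        BASE_URLS[server] + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send(request):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]

# Example (needs a running server and a downloaded model):
# print(send(build_chat_request("ollama", "gpt-oss:20b", "Say hello in one word.")))
```

If this plain request works but the DEVONthink chat does not, the problem is more likely in tool call handling than in the connection itself.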

I’m afraid it is not working well. With LM Studio, the chat window does not work at all.

However, it seems to be a general failure in the latest LM Studio (the second two are with Mistral, which used to work):

However, running it via Tools → Summarize etc. seems to work fine (GPT OSS):

Tool call support in the latest LM Studio versions has been really buggy.

Is there any workaround for this? I don’t use the chat window much, but sometimes it is useful.

Either an older release or Ollama.

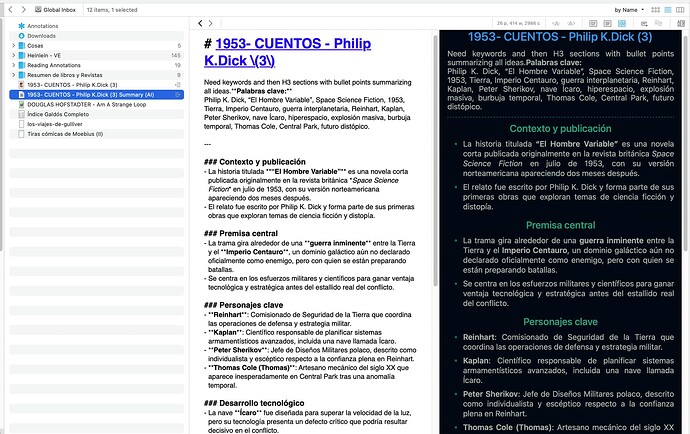

I can confirm that open model support via either LM Studio or Ollama is not functioning well. It works sometimes, for some tasks, but most of the time it’s clear that some configuration or connection hasn’t been set up properly. Ollama often reports a “tool calling limit reached” error, while the same tasks performed on the same model in LM Studio execute without issues. However, LM Studio tends to return the model’s “thinking process” as the output, whereas Ollama at least attempts to execute the task. Overall, the AI function through open source models in DT4 is not yet at a very usable stage.

Note: I have tested 5 models (GPT-oss, Qwen, Gemma 3, Mistral, Deepseek) on both LM Studio and Ollama. The tasks I gave them were ‘Renaming the selected item’s name based on content.’, ‘Tagging the item after reading the content.’, and ‘Compare these contents and give a summary of all the papers and their differences.’ Performance was close to unusable on both Ollama and LM Studio. But there are no issues if I use commercial API keys from OpenAI or Google, with the same or an equivalent model selected. So I believe the problem is most likely due to the connection configuration between DT4 and these open source model hosting servers.

A screenshot of the error message would be useful.

There’s actually no such configuration, all models are (more or less) handled and accessed the same, at least if they have similar capabilities like tool calls. The real difference is the very limited knowledge of the local models and the usually tiny context window (and models without tool call support like DeepSeek can’t perform actions like renaming).

But e.g. Gemma 3:27b with a 32k context window plus a good prompt (both local and inexpensive models usually need better prompts than the top commercial models) used to work quite well in my experience using LM Studio. Unfortunately the latest releases of LM Studio are quite unreliable.

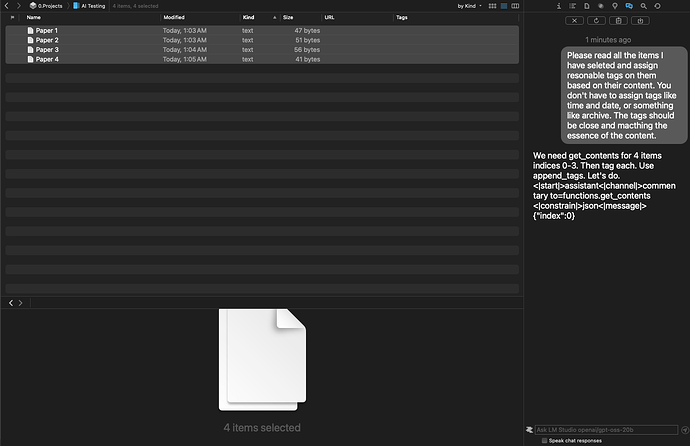

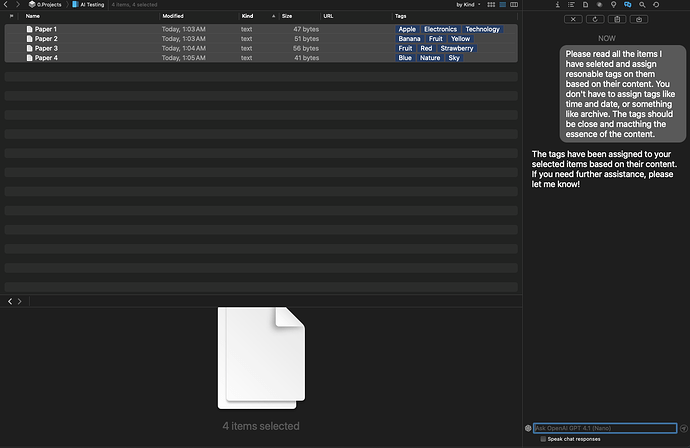

Alright, here is a test I conducted.

I created a new group, and inside this group I created 4 very simple plain text documents, named Paper 1, Paper 2, Paper 3 and Paper 4. The contents of these documents are very simple, as follows:

Paper 1: This is a document about Apple. Apple is Red.

Paper 2: This is a document about banana. Banana is yellow

Paper 3: This is a document about strawberry. Strawberry is red.

Paper 4: This is a document about sky. Sky is blue.

The prompt of the task is the same across all the following tests: “Please read all the items I have seleted and assign resonable tags on them based on their content. You don’t have to assign tags like time and date, or something like archive. The tags should be close and macthing the essence of the content.”

Test 1:

Using Ollama to host gpt-oss 20b, with the context window set to 32,768 in the settings of DT4 (this somehow seems to be the maximum value I could set; I set it to 128K but it changed back by itself) and with Usage set to Best.

Result: Exceeded maximum number (4) of tool call cycles.

Test 2:

Using LM Studio with the same settings and the same model.

Result: It returned what seems to be part of the reasoning to the user and stopped there. No tags were generated.

Test 3:

Using OpenAI’s API key. Model set to GPT4.1-Nano.

Result: Task completed perfectly.

The test subjects, Paper 1 to 4, contain only very little text, so I can’t be sure it was caused by the context window. I might be wrong.
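To illustrate what the Test 1 message probably means: a chat client typically alternates between the model and its tools, and gives up after a fixed number of rounds if the model never produces a plain answer. The sketch below is only my guess at that general pattern, not DEVONthink's actual code; `ask_model`, the reply format and the exception name are hypothetical, and only the limit of 4 and the `get_contents` tool name come from this thread.

```python
MAX_TOOL_CYCLES = 4  # the limit quoted in the Test 1 result

class ToolCycleLimitExceeded(Exception):
    """Raised when the model keeps requesting tools instead of answering."""

def run_with_tools(ask_model, tools, messages, max_cycles=MAX_TOOL_CYCLES):
    """Alternate between the model and its tools until it answers in plain text.

    ask_model(messages) is a hypothetical callable returning either
    {"content": "..."} or {"tool_call": {"name": ..., "arguments": {...}}}.
    """
    for _ in range(max_cycles):
        reply = ask_model(messages)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # final answer, no more tools needed
        # Run the requested tool and feed its result back to the model.
        result = tools[call["name"]](**call["arguments"])
        messages.append(
            {"role": "tool", "name": call["name"], "content": str(result)}
        )
    raise ToolCycleLimitExceeded(
        f"Exceeded maximum number ({max_cycles}) of tool call cycles."
    )
```

Under this reading, a small model that keeps re-requesting the same tool without ever settling on an answer hits the limit even when the documents are tiny, which would fit the observation that the context window is probably not the cause.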

Hope this will help to improve or fix the issue.

The first test works flawlessly using the latest internal build of DEVONthink, today’s update of Ollama and gpt-oss:20b (and likewise gpt-oss:20b & gpt-oss:120b via OpenRouter). But it’s up to Element Labs to fix the currently broken tool call support of LM Studio, it’s not limited to gpt-oss.

There is an issue with tool calls here, yeah

If you use the Change Name to <Suggested Name> DEVONthink action, it works fine with gpt-oss through LM Studio

If you use the “Chat Query” actions, then yes, I see the same issue: it just renames my file to `Need to get contents.<|start|>assistant<|channel|>commentary to=functions.get_contents <|constrain|>json<|message|>{}`

My workaround for this issue has been to use models that don’t support tools; they play nicely with DEVONthink. But I also wish there was a way to just disable tools for queries in DEVONthink itself, so that it passes an identical query to gpt-oss as to a model without tools.

Chat-related placeholders in smart rules & batch processing do not need tool calls.

Yes I know, I meant for cases when using “Chat - Query”. In those cases the “Query response” is just `Need to get contents.<|start|>assistant<|channel|>commentary to=functions.get_contents <|constrain|>json<|message|>{}`, so the output is the model’s reasoning tokens about using a tool, and the tool call itself never happens

…as it’s not a proper tool call.
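For scripts that post-process the query response, one way to at least detect this case is to look for the leaked markup before using the text as a file name. This is a rough sketch based only on the single string quoted above (which looks like the raw gpt-oss chat format coming through unparsed); the pattern and the function name are my own and may not cover other variants.

```python
import json
import re

# Matches the leaked markup quoted above: a commentary-channel message
# addressed to functions.<name>, followed by a JSON argument payload.
LEAKED_CALL = re.compile(
    r"<\|channel\|>commentary to=functions\.(?P<name>[\w.-]+)"
    r".*?<\|message\|>(?P<args>\{.*\})",
    re.DOTALL,
)

def extract_leaked_tool_call(text):
    """Return (tool_name, arguments) if text holds an unparsed tool call, else None."""
    match = LEAKED_CALL.search(text)
    if match is None:
        return None
    try:
        arguments = json.loads(match.group("args"))
    except json.JSONDecodeError:
        return None  # markup present but the payload is not valid JSON
    return match.group("name"), arguments
```

A script could skip the rename whenever this returns something other than `None`, instead of writing the raw tokens into the item name.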

So you mean next update?

Yes, the next update will improve this.

I have not yet had time to study use of AI from within DEVONthink 4 in detail so I have probably missed something.

I am using the free version of ChatGPT via a web browser. That works perfectly.

I have set up DEVONthink with my ChatGPT API key. When I try to use AI Chat I always get an error message “You exceeded your current quota” and consequently no results. Does that mean I need to upgrade my ChatGPT account to the paid version, or have I used the wrong settings within DEVONthink?

Can anyone offer me short guidance to get AI working in DEVONthink without me having to plough my way through the manual?

OpenAI has changed up their API. First there was the Chat Completions API, which many others standardized on. Now OpenAI has the Responses API, which is supposed to include reasoning or something of that nature. So there will be some programming effort involved. Frankly, I’d like to see a more open specification, ideally RFC-based, so that multiple parties can agree on what a good interfacing API should look like. But OpenAI got in before everyone else.

That would also make it easier to run the same query against multiple different models, and therefore easier to benchmark the results. Which would be great information for users, but not necessarily for the companies involved.
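To give an idea of the programming effort, here is a small sketch of how the two request and response shapes differ, as I understand the public OpenAI documentation. The function names are made up for illustration, and real responses carry more fields than shown.

```python
def chat_completions_payload(model, system, user):
    """Request body for POST /v1/chat/completions."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
    }

def responses_payload(model, system, user):
    """Request body for POST /v1/responses."""
    return {"model": model, "instructions": system, "input": user}

def text_from_chat_completion(body):
    """The answer sits in the first choice's message."""
    return body["choices"][0]["message"]["content"]

def text_from_response(body):
    """The answer is spread over typed output items; skip non-message items."""
    parts = []
    for item in body["output"]:
        if item.get("type") != "message":
            continue  # e.g. reasoning or tool call items
        for part in item["content"]:
            if part.get("type") == "output_text":
                parts.append(part["text"])
    return "".join(parts)
```

A client that wants to compare models across providers needs a thin adapter like this for each API shape, which is exactly the kind of duplication a shared specification would remove.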