This is heavily dependent on the nature of your use. The cost per million tokens is quite reasonable IMHO, but if I were doing very complex things like @rkaplan, I would have a different perspective on it.

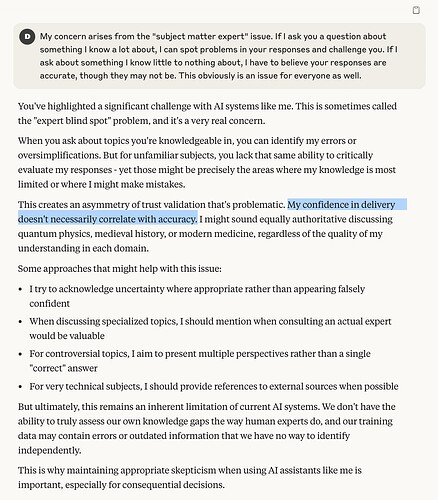

I like Claude and feel it returns the most honest and helpful replies (though yes, it’s not actually ‘thinking’ about it). Because of this I tend to ask it more pointed questions about AI. This is an exchange I had with it last week, and the selected sentence is not only beautifully constructed but also a sobering truth people should pay attention to…

(This was 3.7 Sonnet)

i have set up the claude console for API usage today and already spent USD 0.8 just renaming a few documents in DT. that seems unreasonable to me. and the documents were mostly just invoices.

Very accurate response! I have also noticed that some LLMs are unreliable when providing sources. They often hallucinate sources that don’t exist by authors that have never existed.

- reasonableness is subjective.

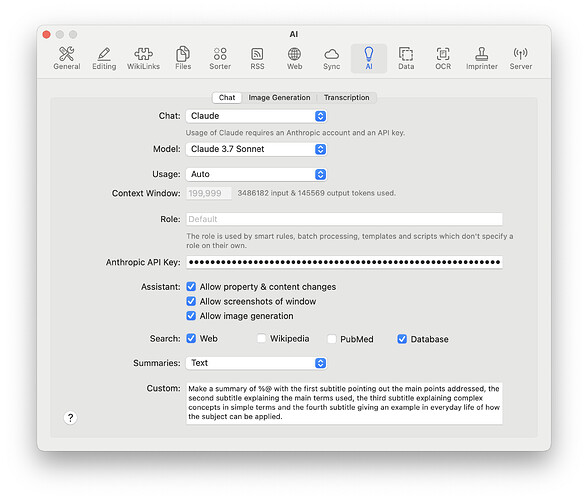

- You have chosen Best in the Usage window which clearly states it will use unlimited tokens, so… ? This is also covered in the help.

There is no question that you want to limit the use of the expensive models to situations where they are necessary, such as very complex analysis or cases where hallucination is a particular risk (although you can never eliminate hallucination completely).

Keep in mind that you can switch from one language model to another, both when you are using the chat tool and when you are using scripting.

So for example, you might use Claude to extract the fundamental facts or ideas from a document and then use a less expensive language model to initiate queries based upon that summary.

For basic actions like renaming a document or listing documents meeting certain criteria, one of the less expensive language models is likely to be sufficient.
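The two-stage idea above can be sketched roughly as follows. This is only an illustration in Python, not DEVONthink's scripting interface: `two_stage_query`, `strong_model` and `cheap_model` are hypothetical names, and each "model" is just a function that takes a prompt and returns text, so any real API call would have to be plugged in by you.

```python
from typing import Callable

# Hypothetical type: a "model" is any function that maps a prompt to a reply.
Model = Callable[[str], str]


def two_stage_query(document_text: str, question: str,
                    strong_model: Model, cheap_model: Model) -> str:
    """Summarize once with the expensive model, then query the summary cheaply."""
    # Stage 1: the expensive model reads the full document exactly once.
    summary = strong_model(
        "Extract the fundamental facts and ideas from this document:\n"
        + document_text
    )
    # Stage 2: the cheaper model only ever sees the (much shorter) summary.
    return cheap_model(
        "Using only this summary:\n" + summary + "\n\nAnswer: " + question
    )


if __name__ == "__main__":
    # Stand-in models for demonstration only; they do not call any service.
    def fake_strong(prompt: str) -> str:
        return "Invoice from ACME, dated 2025-03-01, total 120 EUR."

    def fake_cheap(prompt: str) -> str:
        return "cheap model saw %d characters" % len(prompt)

    print(two_stage_query("...long document text...", "Who issued it?",
                          fake_strong, fake_cheap))
```

The point of the design is that follow-up questions reuse the summary, so the long document is billed as input only once.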

That said, GPT 4.5 at present is stunningly more expensive than any other model. That is probably the one model to be very careful to avoid unless you have a use case where it is really essential and you are monitoring the cost. I suspect very few if any DEVONthink users will want to use that model unless the price comes down by a factor of 10 to 20.

- reasonableness is subjective.

oh, blue.

- covered in help

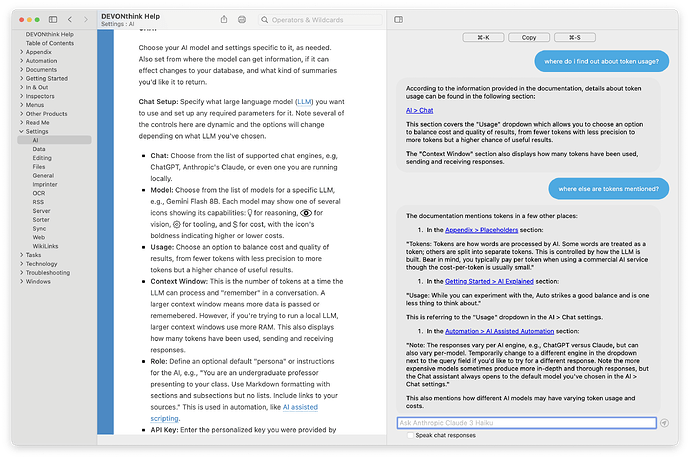

i just had a look, it is not covered (correct me if i am wrong please). all i could find is

Usage: Choose an option to balance cost and quality of results, from fewer tokens with less precision to more tokens but a higher chance of useful results.

which is particularly poor given the financial impact this decision might have. when i do a renaming task of a 5000 token document, i don’t expect to seemingly require millions of tokens for a few renames. the documentation should EXPLAIN what the difference really is. do not hesitate to add actual examples with actual use cases

though i would genuinely be interested to hear where the tokens are coming from that cause the uptick in usage

so far, only the expensive one can rename for me. but even that is hit and miss as the selected document gets renamed to an older query/document etc.

so, you are doing extensive AI stuff with DT? do you mind expanding on what you do?

- The expenses are up to the individual to decide and keep an eye on, just as deciding what is reasonable.

- Development has made many modifications to keep token usage to a minimum but much of it is not under our control.

- It is impossible to add actual use cases in any static way, especially as the arms race causes prices to ascend and descend all the time.

PS: the used tokens are already shown in the AI > Chat settings.

Also…

in what way? the tokens used and how you prep the document/context falls under the purview of DT, no? the actual pricing is irrelevant as it is based on tokens.

Development would have to weigh in with any technical clarifications, but (1) no it’s not all under DEVONthink’s control, especially the responses, and (2) the documentation isn’t meant to be a technical manual of the exact, underlying mechanisms of any processes. We offer the general advice of using Auto as a balanced option but also allow people to choose a higher or lower tier.

I am a physician who evaluates cases in litigation due to injury or alleged medical malpractice. To do that I receive large volumes of PDF documents which can be structured or organized in any random form - typically 1,000 pages in length but sometimes into the tens of thousands of pages.

I developed some custom scripts which create a chronology of these documents. I do not use AI for authoring my reports nor can I rely on AI for facts about a case. But where it shines is its ability to summarize with hyperlinks back to source pages so I can easily find information I am looking for.

So the scripts go through the documents and create summaries in various formats, such as an executive summary of facts, list of diagnoses, list of treating providers, chronological list of events, and a chronological listing of diagnostic studies with links to source.

This type of organization of records historically has been done by nursing staff or secretarial staff who are meticulous in detail. If I look at a case where a nurse reviewer took maybe 10 hours to create a chronology, Claude 3.7 can do it in about 15-20 minutes for $3 to $5, with hyperlinks that the nurse reviewer cannot create (the nurse reviewer would typically just list page numbers).

Incidentally I throw into my scripts the question to Claude “Please identify any inconsistencies in these records.” Usually it points out minor things like an incorrect date. Sometimes it finds really important things like some records from a totally different patient that accidentally were included in the file given to me. And once in a while it finds a true medicolegally significant inconsistency that I might have missed otherwise.

None of this replaces my need to read the records in full. I do not use AI for authoring any reports. But these chronologies do help immensely when I look up details to cite in my reports as well as when I am questioned verbally during a deposition about a case.

This is likely not a typical DT4 use case - but it does show how versatile it can be for whatever your use may be - be it personal, professional, or anything else. Of course that is aside from all the other non-AI benefits of DT3, which I have used for many years now.

It can happen if you ask a query that requires a lengthy answer or you request a detailed answer.

It can particularly occur with Claude 3.7, which has an unusually generous 128,000 maximum output tokens. That compares with 8,192 output tokens for Claude 3.5.

Note that output tokens usually cost more than input tokens.
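To put rough numbers on this, here is a minimal back-of-the-envelope sketch in Python. The prices used (3 USD per million input tokens, 15 USD per million output tokens) are placeholder assumptions for illustration and are not taken from this thread; check your provider's current price list. The function names are made up for this example.

```python
def estimate_cost(input_tokens: int, output_tokens: int,
                  input_price_per_m: float, output_price_per_m: float) -> float:
    """Return the estimated cost in USD for one request."""
    input_cost = input_tokens / 1_000_000 * input_price_per_m
    output_cost = output_tokens / 1_000_000 * output_price_per_m
    return input_cost + output_cost


def input_tokens_for_budget(budget_usd: float, input_price_per_m: float) -> int:
    """Return how many input tokens a given spend buys (ignoring output)."""
    return int(budget_usd / input_price_per_m * 1_000_000)


if __name__ == "__main__":
    # Assumed placeholder prices in USD per million tokens.
    IN_PRICE, OUT_PRICE = 3.0, 15.0

    # One rename of a 5,000-token document with a short 50-token reply.
    print(f"{estimate_cost(5_000, 50, IN_PRICE, OUT_PRICE):.5f} USD")

    # How many input tokens would USD 0.80 correspond to at that price?
    print(input_tokens_for_budget(0.80, IN_PRICE), "input tokens")
```

Under these assumed prices a single pass over a 5,000-token document costs well under two cents, so a spend of USD 0.80 would imply a few hundred thousand tokens in total, which suggests the context was sent many times or was much larger than the document itself.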

Thanks so much for the detailed write up. when you say scripts, what are we talking here? Automator, shell, actual programming?

I am surprised this works, never would have thought Claude can give you a chronology for example

Thanks, you are right. But in my case (so far) i have only used it to do renaming of documents

It is done via Applescript.

This is an example I made from an actual case in which all of the names, dates, locations, facility names, and other pertinent details have been changed, along with some of the facts, so it is an anonymous, fictitious document purely for demonstration purposes. The actual working document is in Markdown, which displays better in DT4, but you still get a good idea of it in PDF. The best feature is that the live document links to the actual pages in the records, but obviously the links do not work here. Still, it gives you an idea of what Claude can do.

anonymized_medical_documents_summary_modified.pdf (684.0 KB)

But I am not suggesting you need to go to this extreme to benefit from DT4 - it’s great for much simpler projects too, which are its primary intent.

Exactly. See the icon for tool support in the model popup.

Indeed. What might work for one model does not necessarily work for other models. Frequently it’s even difficult to reproduce results. But a good prompt is usually the key to success or failure. Talk to the AI like a good teacher/employer would talk to their students/employees.

E.g. `Summarize` might sometimes deliver the results you’d expect and want. But `Summarize the text of the selected PDF document. Return a table including the columns "Page", "Brief Description" and "Link"` is better, though it’s still a rather primitive prompt.

For automated renaming I would recommend using the new placeholders for smart rules and batch processing. Renaming on demand via the chat assistant is definitely overkill.
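If you assemble prompts like this in a script, a small helper keeps the requested output structure explicit. This is only a sketch in Python; `build_table_prompt` is a hypothetical helper and not part of DEVONthink or any AI library.

```python
def build_table_prompt(task: str, columns: list[str]) -> str:
    """Append an explicit table specification to a task description."""
    if not columns:
        raise ValueError("at least one column is required")
    quoted = [f'"{name}"' for name in columns]
    if len(quoted) == 1:
        column_text = quoted[0]
        noun = "column"
    else:
        # Join as: "A", "B" and "C"
        column_text = ", ".join(quoted[:-1]) + " and " + quoted[-1]
        noun = "columns"
    return f"{task} Return a table including the {noun} {column_text}."


if __name__ == "__main__":
    print(build_table_prompt(
        "Summarize the text of the selected PDF document.",
        ["Page", "Brief Description", "Link"],
    ))
```

Naming the columns up front tends to make results easier to compare between models, although, as noted above, the same prompt can still behave differently from one model to the next.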

i just had a look, what new placeholders would you suggest for renaming?

extracting the type of invoice (the AI seems to understand it) seems still extremely difficult with smart rules (maybe if regex is somehow supported?)