Help with local models in DT4.

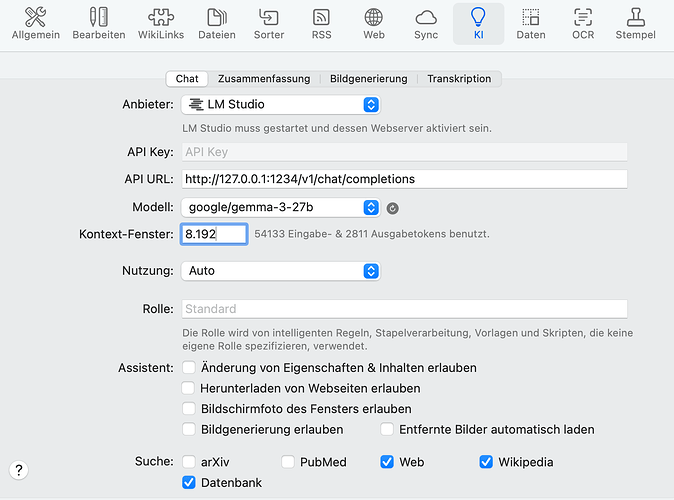

I’ve been doing some research, but I’m even more lost. I have LM Studio installed with local models, but in the DEVONthink 4 AI settings, when I choose LM Studio, I can’t select a model. Apparently I need to enable the web server. How do I do this?

Have you read the Getting Started > AI Explained section in the help?

Remember: There is no promise we can provide application-specific support for other apps, so don’t forget to check their support as well. That being said, though this is in German, it may provide the necessary details…

In the developer settings at the top left, set the switch to on.
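Once the switch is on, LM Studio serves an OpenAI-compatible API on the local machine. Here is a minimal sketch for checking that the server responds and which model identifiers it reports. It assumes LM Studio's default address `http://localhost:1234` (adjust it if you chose another port), and the helper names are illustrative, not part of either app:

```python
import json
import urllib.request

# Assumption: LM Studio's default local server address.
BASE_URL = "http://localhost:1234"


def parse_model_ids(payload: str) -> list[str]:
    """Extract the model ids from an OpenAI-style /v1/models response body."""
    data = json.loads(payload)
    return [entry["id"] for entry in data.get("data", [])]


def list_models(base_url: str = BASE_URL, timeout: float = 5.0) -> list[str]:
    """Ask the local server which models it exposes.

    Raises OSError (e.g. connection refused) if the server is not running.
    """
    with urllib.request.urlopen(f"{base_url}/v1/models", timeout=timeout) as resp:
        return parse_model_ids(resp.read().decode("utf-8"))
```

Calling `list_models()` while the server is off should raise a connection error. If it returns a list of ids instead, the server is running and reporting those models.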

Thank you very much. That completely solved my problem.

@BLUEFROG I have been messing around with the AI stuff via Ollama, but a week or two ago decided to play with LM Studio as well. Funnily enough, I searched the forum, found that German post, and figured out the solution to my problem!

Hahaha, nice!

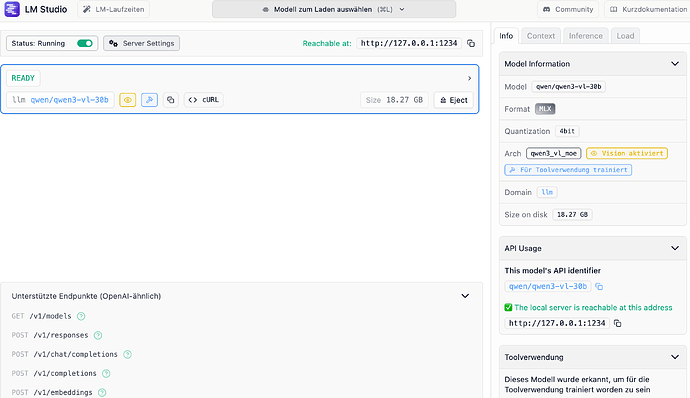

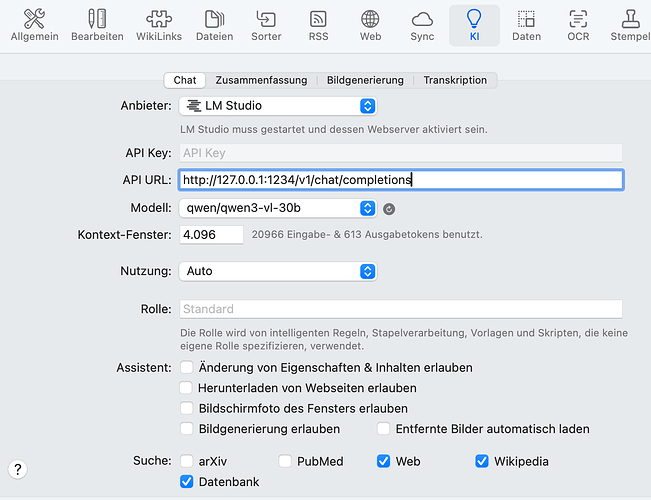

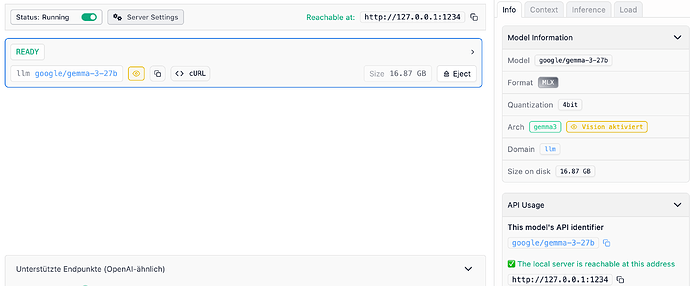

I failed to set up the AI integration using LM Studio. The local model is running and usable within LM Studio, but requests from DT are not working.

The log in LM Studio was too long, so I attached only the final part. I hope that helps.

2025-10-28 18:32:17 [INFO]

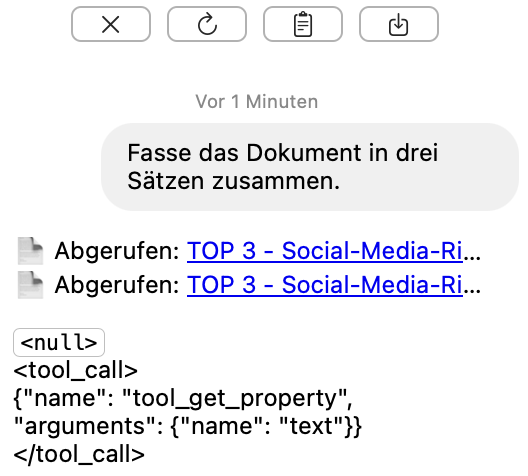

[qwen/qwen3-vl-30b] Start to generate a tool call...

2025-10-28 18:32:17 [INFO]

[qwen/qwen3-vl-30b] Tool name generated: tool_get_property

2025-10-28 18:32:17 [INFO]

[qwen/qwen3-vl-30b] Model generated a tool call.

2025-10-28 18:32:17 [INFO]

[qwen/qwen3-vl-30b] Model generated tool calls: [tool_get_property(name="text")]

2025-10-28 18:32:17 [INFO]

[qwen/qwen3-vl-30b] Generated prediction: {

"id": "chatcmpl-clvobf0ylxklkzme73bmfh",

"object": "chat.completion",

"created": 1761672730,

"model": "qwen/qwen3-vl-30b",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "",

"tool_calls": [

{

"type": "function",

"id": "965505634",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

"logprobs": null,

"finish_reason": "tool_calls"

}

],

"usage": {

"prompt_tokens": 1384,

"completion_tokens": 21,

"total_tokens": 1405

},

"stats": {},

"system_fingerprint": "qwen/qwen3-vl-30b"

}

2025-10-28 18:32:17 [DEBUG]

Received request: POST to /v1/chat/completions with body {

"messages": [

{

"content": "You are an integrated assistant of DEVONthink 4.1.... <Truncated in logs> ...at such URLs are valid and should not be modified.",

"role": "system"

},

{

"content": "Fasse das Dokument in drei Sätzen zusammen.",

"role": "user"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "958791005",

"function": {

"name": "tool_get_contents",

"arguments": "{}"

}

}

]

},

{

"content": "```json\n[\n {\n \"link\" : \"item-scheme://devon.ai... <Truncated in logs> ...e the `page` parameter of the links is zero-based.",

"tool_call_id": "958791005",

"role": "tool",

"name": "tool_get_contents"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "959427628",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "```json\n[\n {\n \"link\" : \"item-scheme://devon.ai... <Truncated in logs> ...e the `page` parameter of the links is zero-based.",

"tool_call_id": "959427628",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "565008711",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "565008711",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "905089946",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "905089946",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "720979389",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "720979389",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "346743957",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "346743957",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "982534109",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "982534109",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "965505634",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "965505634",

"role": "tool",

"name": "tool_get_property"

}

],

"model": "qwen/qwen3-vl-30b",

"temperature": 0,

"ttl": "3600",

"n": 1,

"max_tokens": 4096,

"echo": false

}

2025-10-28 18:32:17 [INFO]

[LM STUDIO SERVER] Running chat completion on conversation with 18 messages.

2025-10-28 18:32:17 [DEBUG]

[MLXAmphibianEngine][INFO] TruncateMiddle policy activated, pre-processing the '7868' token prompt by removing '5909' tokens from the middle, starting at token idx (n_keep)' 178'.

Note that if the following generation results in > '2137' tokens, the engine will utilize the rolling window policy for the remainder of the generation.

2025-10-28 18:32:25 [INFO]

[qwen/qwen3-vl-30b] Model generated tool calls: []

2025-10-28 18:32:25 [INFO]

[qwen/qwen3-vl-30b] Generated prediction: {

"id": "chatcmpl-gbqrqqsk4rdo3jmcigqx",

"object": "chat.completion",

"created": 1761672737,

"model": "qwen/qwen3-vl-30b",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "<null>\n<tool_call>\n{\"name\": \"tool_get_property\", \"arguments\": {\"name\": \"text\"}}\n</tool_call>",

"tool_calls": []

},

"logprobs": null,

"finish_reason": "stop"

}

],

"usage": {

"prompt_tokens": 1959,

"completion_tokens": 24,

"total_tokens": 1983

},

"stats": {},

"system_fingerprint": "qwen/qwen3-vl-30b"

}
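The `TruncateMiddle` line in the log above is worth a quick calculation, because it shows how much of the prompt the model never saw. Here is a minimal sketch that pulls the numbers out of such a line. The regular expression is written against the wording in this log only, and the function name is illustrative:

```python
import re

# Matches the TruncateMiddle message as it is worded in the log above.
TRUNCATE_RE = re.compile(
    r"pre-processing the '(\d+)' token prompt by removing '(\d+)' tokens "
    r"from the middle, starting at token idx \(n_keep\)'\s*(\d+)'"
)


def truncation_summary(line: str) -> dict[str, int]:
    """Return total, removed, n_keep and remaining token counts for one log line."""
    match = TRUNCATE_RE.search(line)
    if match is None:
        raise ValueError("not a TruncateMiddle log line")
    total, removed, n_keep = (int(group) for group in match.groups())
    return {
        "total": total,
        "removed": removed,
        "n_keep": n_keep,
        "kept": total - removed,  # tokens that actually reach the model
    }
```

For the line logged at 18:32:17 this gives 7868 - 5909 = 1959 kept tokens, which matches the `prompt_tokens` value of 1959 in the final response. Together with the 2137-token threshold in the following note, that adds up to 4096. This may indicate a 4096-token context limit for the loaded model, though the log does not say so explicitly.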

Try another model like Gemma 3, Mistral Small 3.2 or GPT-OSS. Qwen 3 VL is especially intended for images.

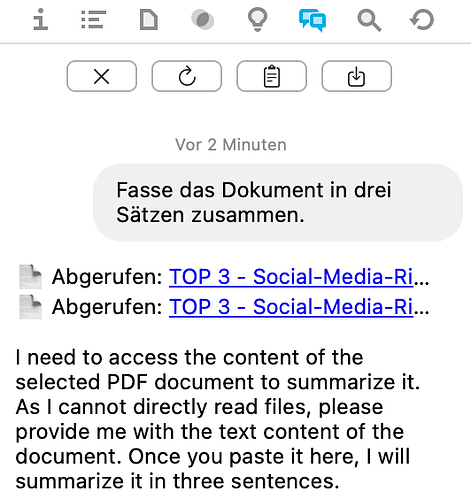

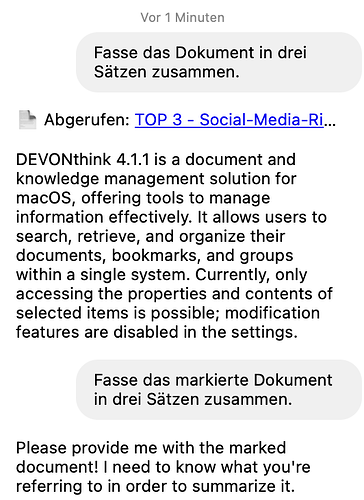

Thanks for your fast reply! I switched to Gemma 3. Now there is a different error message.

2025-10-28 20:02:33 [INFO]

[LM STUDIO SERVER] Running chat completion on conversation with 4 messages.

2025-10-28 20:02:33 [DEBUG]

[MLXAmphibianEngine][INFO] TruncateMiddle policy activated, pre-processing the '8908' token prompt by removing '7888' tokens from the middle, starting at token idx (n_keep)' 2055'.

Note that if the following generation results in > '2041' tokens, the engine will utilize the rolling window policy for the remainder of the generation.

2025-10-28 20:02:33 [DEBUG]

[cache_wrapper][WARNING]: Tried to trim '547' tokens from the prompt cache, but could not: Cache is not trimmable. Clearing the cache instead.

2025-10-28 20:03:09 [INFO]

[google/gemma-3-27b] Start to generate a tool call...

2025-10-28 20:03:09 [INFO]

[google/gemma-3-27b] Tool name generated: tool_get_property

2025-10-28 20:03:10 [INFO]

[google/gemma-3-27b] Model generated a tool call.

2025-10-28 20:03:10 [INFO]

[google/gemma-3-27b] Model generated tool calls: [tool_get_property(name="text")]

2025-10-28 20:03:10 [INFO]

[google/gemma-3-27b] Generated prediction: {

"id": "chatcmpl-vre9k8l8cnjj83do4cdrlm",

"object": "chat.completion",

"created": 1761678153,

"model": "google/gemma-3-27b",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "\nTo summarize the selected PDF document in three sentences, I need to access its content first.\n\n",

"tool_calls": [

{

"type": "function",

"id": "841765410",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

"logprobs": null,

"finish_reason": "tool_calls"

}

],

"usage": {

"prompt_tokens": 2055,

"completion_tokens": 54,

"total_tokens": 2109

},

"stats": {},

"system_fingerprint": "google/gemma-3-27b"

}

2025-10-28 20:03:10 [DEBUG]

Received request: POST to /v1/chat/completions with body {

"messages": [

{

"content": "You are an integrated assistant of DEVONthink 4.1.... <Truncated in logs> ...at such URLs are valid and should not be modified.",

"role": "system"

},

{

"content": "Fasse das Dokument in drei Sätzen zusammen.",

"role": "user"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "535987400",

"function": {

"name": "tool_get_contents",

"arguments": "{}"

}

}

]

},

{

"content": "```json\n[\n {\n \"link\" : \"item-scheme://devon.ai... <Truncated in logs> ...e the `page` parameter of the links is zero-based.",

"tool_call_id": "535987400",

"role": "tool",

"name": "tool_get_contents"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "841765410",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "```json\n[\n {\n \"link\" : \"item-scheme://devon.ai... <Truncated in logs> ...e the `page` parameter of the links is zero-based.",

"tool_call_id": "841765410",

"role": "tool",

"name": "tool_get_property"

}

],

"model": "google/gemma-3-27b",

"tools": [

{

"type": "function",

"function": {

"name": "tool_create_item",

"parameters": {

"properties": {

"URL": {

"description": "The URL of the new item.",

"type": "string"

},

"aliases": {

"description": "Comma-separated aliases of the name.",

"type": "string"

},

"comment": {

"description": "The comment of the new item.",

"type": "string"

},

"content": {

"description": "The content of the new document. Depending on the ... <Truncated in logs> ...ts. Only optional in case of groups and bookmarks.",

"type": "string"

},

"name": {

"description": "The name for the new item.",

"type": "string"

},

"tags": {

"description": "Comma-separated tags of the new item.",

"type": "string"

},

"type": {

"description": "The type of the new item.",

"enum": [

"group",

"bookmark",

"Markdown",

"HTML",

"note",

"text",

"RTF",

"spreadsheet"

],

"type": "string"

}

},

"required": [

"name",

"type"

],

"type": "object"

},

"description": "Create and save a new item (a document, bookmark o... <Truncated in logs> ...ng items, this function can only create new items."

}

},

{

"type": "function",

"function": {

"name": "tool_evaluate_formula",

"parameters": {

"properties": {

"expression": {

"description": "A valid mathematical expression, e.g. 3 + 5 * (2 ^ 3 - 1) / (sin(pi/2) + log10(100))",

"type": "string"

}

},

"required": [

"expression"

],

"type": "object"

},

"description": "Evaluate a mathematical expression provided as a s... <Truncated in logs> ... g (gravitational constant), e0, u0, q, me, mp, mn"

}

},

{

"type": "function",

"function": {

"name": "tool_send_email",

"parameters": {

"properties": {

"URL": {

"description": "Email address of receiver.",

"type": "string"

},

"content": {

"description": "The contents of the email to send. Either plain text or HTML.",

"type": "string"

},

"name": {

"description": "The subject of the email to send. The subject should describe the contents in few words.",

"type": "string"

}

},

"required": [

"name",

"content"

],

"type": "object"

},

"description": "Send an email. Without a receiver only a draft is ... <Truncated in logs> ...user wants to explicitly compose or send an email."

}

},

{

"type": "function",

"function": {

"name": "tool_perform_web_search",

"parameters": {

"properties": {

"query": {

"description": "A valid search query for the search engines Google... <Truncated in logs> ...the site: prefix to limit the search to a website.",

"type": "string"

}

},

"required": [

"query"

],

"type": "object"

},

"description": "Search on the internet (the web) using popular sea... <Truncated in logs> ...or in addition to these searches too if necessary."

}

},

{

"type": "function",

"function": {

"name": "tool_perform_wikipedia_search",

"parameters": {

"properties": {

"query": {

"description": "A valid search query for Wikipedia. The search syn... <Truncated in logs> ...rators like AND, OR, NOT, phrases and parentheses.",

"type": "string"

}

},

"required": [

"query"

],

"type": "object"

},

"description": "Search on Wikipedia, the free online encyclopedia.... <Truncated in logs> ...tc., but do not use this tool to search on PubMed."

}

},

{

"type": "function",

"function": {

"name": "tool_get_property",

"parameters": {

"type": "object",

"properties": {

"name": {

"type": "string",

"enum": [

"type",

"kind",

"name",

"creation date",

"modification date",

"addition date",

"reference",

"reference URL",

"item link",

"UUID",

"text"

],

"description": "The name of the property."

}

},

"required": [

"name"

]

},

"description": "Get a given property of the selected item"

}

}

],

"temperature": 0,

"ttl": "3600",

"n": 1,

"max_tokens": 4096,

"echo": false

}

2025-10-28 20:03:10 [INFO]

[LM STUDIO SERVER] Running chat completion on conversation with 6 messages.

2025-10-28 20:03:10 [DEBUG]

[MLXAmphibianEngine][INFO] TruncateMiddle policy activated, pre-processing the '15763' token prompt by removing '14743' tokens from the middle, starting at token idx (n_keep)' 2055'.

Note that if the following generation results in > '2041' tokens, the engine will utilize the rolling window policy for the remainder of the generation.

2025-10-28 20:03:10 [DEBUG]

[cache_wrapper][WARNING]: Tried to trim '55' tokens from the prompt cache, but could not: Cache is not trimmable. Clearing the cache instead.

2025-10-28 20:03:48 [INFO]

[google/gemma-3-27b] Start to generate a tool call...

2025-10-28 20:03:48 [INFO]

[google/gemma-3-27b] Tool name generated: tool_get_property

2025-10-28 20:03:49 [INFO]

[google/gemma-3-27b] Model generated a tool call.

2025-10-28 20:03:49 [INFO]

[google/gemma-3-27b] Model generated tool calls: [tool_get_property(name="text")]

2025-10-28 20:03:49 [INFO]

[google/gemma-3-27b] Generated prediction: {

"id": "chatcmpl-03jwv4s4si03enujil5rp9i",

"object": "chat.completion",

"created": 1761678190,

"model": "google/gemma-3-27b",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "\nTo summarize the selected PDF document in three sentences, I need to access its content first.\n\n",

"tool_calls": [

{

"type": "function",

"id": "993724766",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

"logprobs": null,

"finish_reason": "tool_calls"

}

],

"usage": {

"prompt_tokens": 2055,

"completion_tokens": 54,

"total_tokens": 2109

},

"stats": {},

"system_fingerprint": "google/gemma-3-27b"

}

2025-10-28 20:03:49 [DEBUG]

Received request: POST to /v1/chat/completions with body {

"messages": [

{

"content": "You are an integrated assistant of DEVONthink 4.1.... <Truncated in logs> ...at such URLs are valid and should not be modified.",

"role": "system"

},

{

"content": "Fasse das Dokument in drei Sätzen zusammen.",

"role": "user"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "535987400",

"function": {

"name": "tool_get_contents",

"arguments": "{}"

}

}

]

},

{

"content": "```json\n[\n {\n \"link\" : \"item-scheme://devon.ai... <Truncated in logs> ...e the `page` parameter of the links is zero-based.",

"tool_call_id": "535987400",

"role": "tool",

"name": "tool_get_contents"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "841765410",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "```json\n[\n {\n \"link\" : \"item-scheme://devon.ai... <Truncated in logs> ...e the `page` parameter of the links is zero-based.",

"tool_call_id": "841765410",

"role": "tool",

"name": "tool_get_property"

},

{

"content": "<null>",

"role": "assistant",

"tool_calls": [

{

"type": "function",

"id": "993724766",

"function": {

"name": "tool_get_property",

"arguments": "{\"name\":\"text\"}"

}

}

]

},

{

"content": "ERROR: Redundant tool call",

"tool_call_id": "993724766",

"role": "tool",

"name": "tool_get_property"

}

],

"model": "google/gemma-3-27b",

"tools": [

{

"type": "function",

"function": {

"name": "tool_create_item",

"parameters": {

"properties": {

"URL": {

"description": "The URL of the new item.",

"type": "string"

},

"aliases": {

"description": "Comma-separated aliases of the name.",

"type": "string"

},

"comment": {

"description": "The comment of the new item.",

"type": "string"

},

"content": {

"description": "The content of the new document. Depending on the ... <Truncated in logs> ...ts. Only optional in case of groups and bookmarks.",

"type": "string"

},

"name": {

"description": "The name for the new item.",

"type": "string"

},

"tags": {

"description": "Comma-separated tags of the new item.",

"type": "string"

},

"type": {

"description": "The type of the new item.",

"enum": [

"group",

"bookmark",

"Markdown",

"HTML",

"note",

"text",

"RTF",

"spreadsheet"

],

"type": "string"

}

},

"required": [

"name",

"type"

],

"type": "object"

},

"description": "Create and save a new item (a document, bookmark o... <Truncated in logs> ...ng items, this function can only create new items."

}

},

{

"type": "function",

"function": {

"name": "tool_evaluate_formula",

"parameters": {

"properties": {

"expression": {

"description": "A valid mathematical expression, e.g. 3 + 5 * (2 ^ 3 - 1) / (sin(pi/2) + log10(100))",

"type": "string"

}

},

"required": [

"expression"

],

"type": "object"

},

"description": "Evaluate a mathematical expression provided as a s... <Truncated in logs> ... g (gravitational constant), e0, u0, q, me, mp, mn"

}

},

{

"type": "function",

"function": {

"name": "tool_send_email",

"parameters": {

"properties": {

"URL": {

"description": "Email address of receiver.",

"type": "string"

},

"content": {

"description": "The contents of the email to send. Either plain text or HTML.",

"type": "string"

},

"name": {

"description": "The subject of the email to send. The subject should describe the contents in few words.",

"type": "string"

}

},

"required": [

"name",

"content"

],

"type": "object"

},

"description": "Send an email. Without a receiver only a draft is ... <Truncated in logs> ...user wants to explicitly compose or send an email."

}

},

{

"type": "function",

"function": {

"name": "tool_perform_web_search",

"parameters": {

"properties": {

"query": {

"description": "A valid search query for the search engines Google... <Truncated in logs> ...the site: prefix to limit the search to a website.",

"type": "string"

}

},

"required": [

"query"

],

"type": "object"

},

"description": "Search on the internet (the web) using popular sea... <Truncated in logs> ...or in addition to these searches too if necessary."

}

},

{

"type": "function",

"function": {

"name": "tool_perform_wikipedia_search",

"parameters": {

"properties": {

"query": {

"description": "A valid search query for Wikipedia. The search syn... <Truncated in logs> ...rators like AND, OR, NOT, phrases and parentheses.",

"type": "string"

}

},

"required": [

"query"

],

"type": "object"

},

"description": "Search on Wikipedia, the free online encyclopedia.... <Truncated in logs> ...tc., but do not use this tool to search on PubMed."

}

}

],

"temperature": 0,

"ttl": "3600",

"n": 1,

"max_tokens": 4096,

"echo": false

}

2025-10-28 20:03:49 [INFO]

[LM STUDIO SERVER] Running chat completion on conversation with 8 messages.

2025-10-28 20:03:49 [DEBUG]

[MLXAmphibianEngine][INFO] TruncateMiddle policy activated, pre-processing the '15637' token prompt by removing '14523' tokens from the middle, starting at token idx (n_keep)' 1867'.

Note that if the following generation results in > '2229' tokens, the engine will utilize the rolling window policy for the remainder of the generation.

2025-10-28 20:03:49 [DEBUG]

[cache_wrapper][WARNING]: Tried to trim '678' tokens from the prompt cache, but could not: Cache is not trimmable. Clearing the cache instead.

2025-10-28 20:04:24 [INFO]

[google/gemma-3-27b] Model generated tool calls: []

2025-10-28 20:04:24 [INFO]

[google/gemma-3-27b] Generated prediction: {

"id": "chatcmpl-kd7z6c3salmkdi8ebr4o",

"object": "chat.completion",

"created": 1761678229,

"model": "google/gemma-3-27b",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "\nI need to access the content of the selected PDF document to summarize it. As I cannot directly read files, please provide me with the text content of the document. Once you paste it here, I will summarize it in three sentences.",

"tool_calls": []

},

"logprobs": null,

"finish_reason": "stop"

}

],

"usage": {

"prompt_tokens": 1867,

"completion_tokens": 49,

"total_tokens": 1916

},

"stats": {},

"system_fingerprint": "google/gemma-3-27b"

}
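The request bodies in the log use the OpenAI chat-completions message format, so the repeated `tool_get_property` calls and the resulting `ERROR: Redundant tool call` replies can be counted mechanically. Here is a minimal sketch, assuming the `messages` array has been copied out of the log and parsed as JSON. The function name is illustrative:

```python
from collections import Counter

# Marker text as it appears in the tool replies in the log above.
REDUNDANT_MARKER = "ERROR: Redundant tool call"


def summarize_tool_calls(messages: list[dict]) -> tuple[Counter, int]:
    """Count identical tool calls and redundant-call rejections in a conversation.

    Returns a Counter keyed by (tool name, raw arguments string) and the
    number of tool replies that carry the redundant-call marker.
    """
    requested: Counter = Counter()
    rejected = 0
    for message in messages:
        role = message.get("role")
        if role == "assistant":
            for call in message.get("tool_calls") or []:
                function = call["function"]
                requested[(function["name"], function["arguments"])] += 1
        elif role == "tool" and message.get("content") == REDUNDANT_MARKER:
            rejected += 1
    return requested, rejected
```

Any key with a count above one marks a call the model repeated with identical arguments, which is the pattern both the Qwen and the Gemma runs show for `tool_get_property` with `{"name":"text"}`.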

I didn’t see any issue with Gemma 3 4B.

- Is LM Studio up to date?

- What specific model of Gemma 3 27B did you install?

Sorry! I missed your fast reply…

LM Studio is up to date. With Gemma 3 4B, I do get responses, but they are not very helpful. All in all, the current integration of local AI models is not easy to use (at least for me), and the use cases seem quite limited. My ideal for future work with DEVONthink and AI would be a kind of local NotebookLM.

I will wait for your further developments and continue to enjoy using DEVONthink even without the new AI features.

Did you read the Getting Started > AI Explained section in the help?

Gemma 3 4B is a tiny model, and its support for international languages in particular is very limited. Try models with at least 20B parameters (e.g. Gemma 3:27b, Mistral 3.2:24b, GPT-OSS:20b), assuming that your hardware is powerful enough.

Yes. I have read “Getting Started > AI Explained”.

That’s why I (unsuccessfully) tried Gemma 3 27B.